
Much of the research on the genocide against Tutsi communities has neglected the testimonies of survivors. Credit: Chip Somodevilla/Getty

This month marks 30 years since the start of the 1994 genocide against Rwanda’s Tutsi communities. Around 800,000 Tutsi were killed by armed Hutu militia and citizens over 100 days. Members of the Hutu and Twa communities also died, in what some scholars call the worst atrocity of the late twentieth century.

This 30th anniversary is a poignant reminder of many things, but perhaps first and foremost of the international community’s failure to intervene and stop the killings. Massacres of Tutsi people had been happening for decades before 1994, but calls for help from inside Rwanda were ignored, with horrific consequences.

This week, in a News Feature commemorating the anniversary of the atrocity, Nature has spoken to researchers about what has been learnt about the genocide, the consequences for its survivors and its aftermath. Lessons from studying a specific genocide can be applicable to many events that involve conflict.

The 1948 Convention on the Prevention and Punishment of the Crime of Genocide, adopted after the Second World War, defines genocide as “an act committed with intent to destroy, in whole or in part, a national, ethnical, racial or religious group”. It is, the convention states, an “odious scourge” that “at all periods of history … has inflicted great losses on humanity”.

Genocide is incredibly difficult to study. The hardest question of all concerns a genocide’s origins: how wars and violence can escalate to genocidal acts. At the same time, genocide studies is not one discipline. It spans the political and social sciences, anthropology, biology, economics, history, law, medicine, sociology and more. Researchers bring individual disciplinary insights, but must also collaborate. Nature heard from researchers studying peace-building between communities affected by the genocide, and learnt about mental-health approaches that have helped survivors. We also spoke to scientists who have studied how the trauma from the event has marked the DNA of survivors and their children. Intergenerational trauma — trauma relating to the genocide that affects younger generations who did not directly experience it — remains a challenge for mental-health services in Rwanda. But this is a legacy of all atrocities, and one that societies should be prepared for.

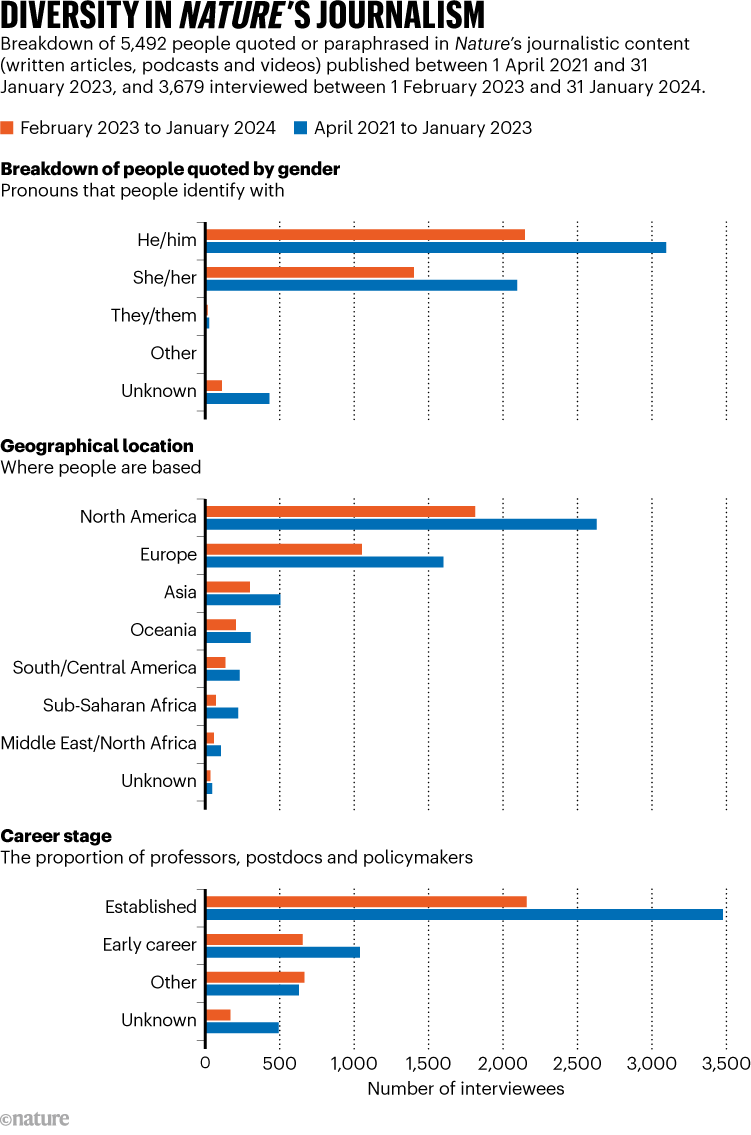

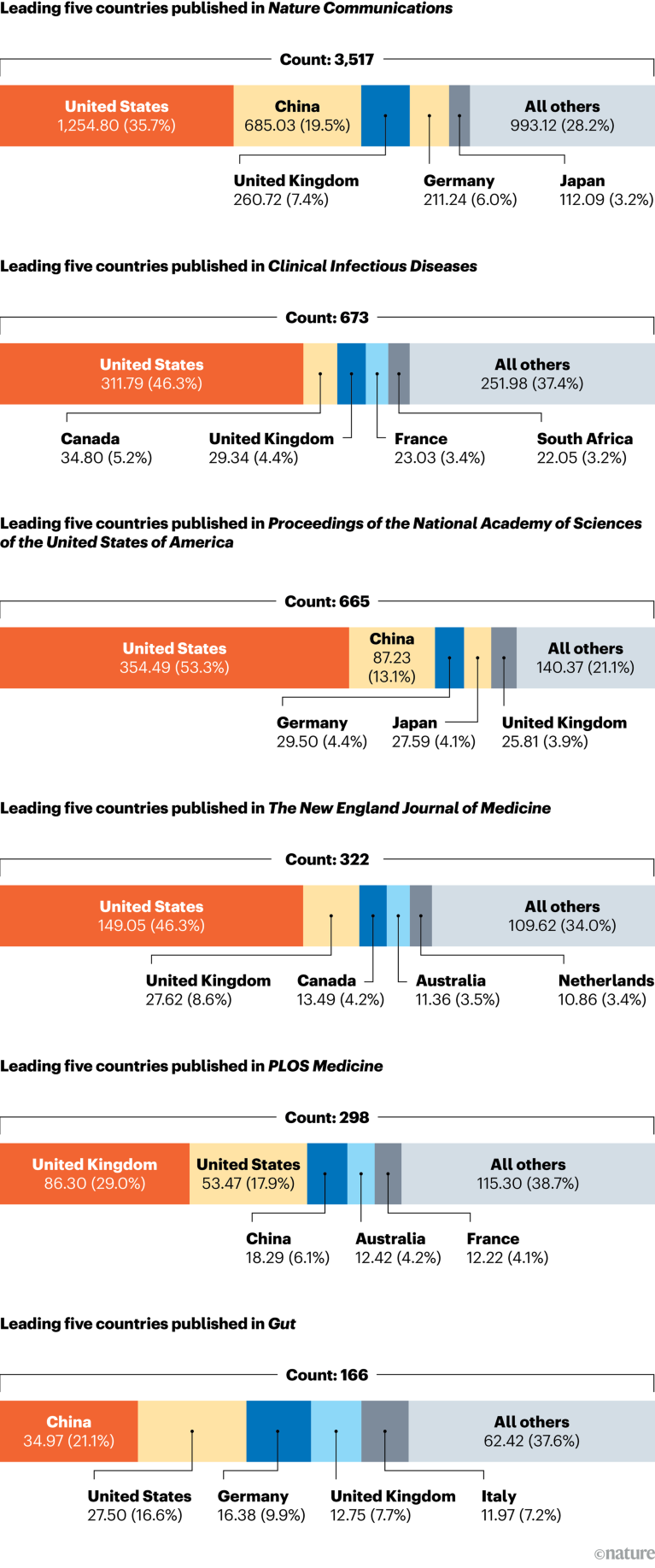

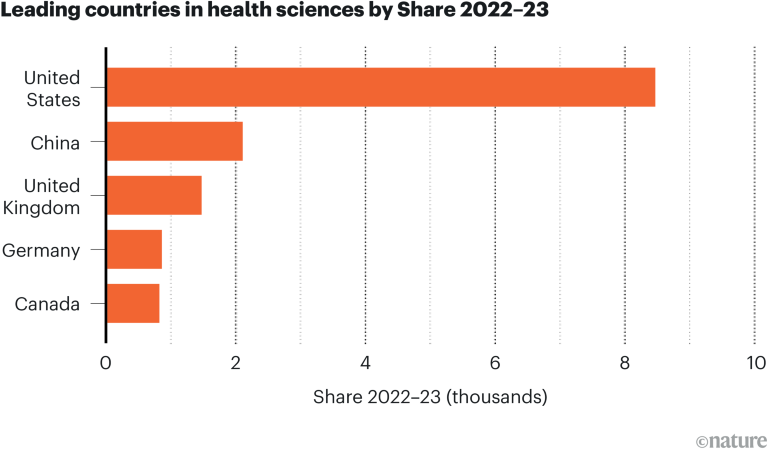

In Rwanda’s case, the genocide nearly wiped out the country’s academic community; until recently, the study of the atrocity had largely been done by researchers from other countries. Rwanda’s scholars have re-established themselves and must be supported so they can lead the study of genocide, political violence and beyond. The country already hosts some of Africa’s notable research institutions, including a chapter of the African Institute for Mathematical Sciences in Kigali and the African Medicines Agency, soon to be established in the capital.

Researchers in African countries face many barriers. They consistently report that international journals are too quick to reject their submissions. Some told Nature that this might be because of a perception that research from low-income nations or countries with limited academic autonomy is of low quality. One exceptional effort that is helping to overcome these barriers is the Research, Policy and Higher Education programme, focused on Rwanda. Launched a decade ago by the Aegis Trust, a UK-based charity in Nottingham, the programme invites Rwandan scholars to submit research proposals; external researchers then offer advice and expertise to help get the resulting work published in international venues, such as peer-reviewed journals. The published works are collected in a resource called the Genocide Research Hub.

So far, more than 40 scholars have published dozens of journal articles, book chapters and working papers. Some studies have already influenced Rwandan policy relating to the genocide. For example, Rwandan scholar Munyurangabo Benda, a philosopher of religion at the Queen’s Foundation, an ecumenical college in Birmingham, UK, investigated feelings of guilt among children of Hutu perpetrators born after the genocide. A peace-building project that involved this generation of children grew into a nationwide programme on reconciliation. Benda’s academic research played a part in broadening the programme’s offerings.

In the immediate aftermath of atrocities, focus is often put on perpetrators, as legal organizations seek to make convictions and secure justice. But, in the study of genocide, it is imperative to listen to survivors, to establish their needs and how they can be supported, and also to ensure that their testimonies and experiences are not lost.

Much of the research on the genocide against the Tutsi has neglected the testimonies of survivors, particularly women, says Noam Schimmel, a scholar of international studies and human rights at the University of California, Berkeley. Survivors need to be given opportunities to share and write about their own perspectives and experiences — whether in literature, as part of research or in journalism — which can help to overcome isolation and marginalization, and to improve their well-being and welfare.

As atrocities continue to unfold around the world, researchers can learn from Rwanda. Those in positions of responsibility must allow researchers from affected countries to lead where they can, and to elevate the voices of survivors. In doing so, they will bring a deeper level of experience that might allow us to better study and understand these heinous acts. We might still be far from answers — but greater knowledge can only help to shine more light on this darkest of places.
