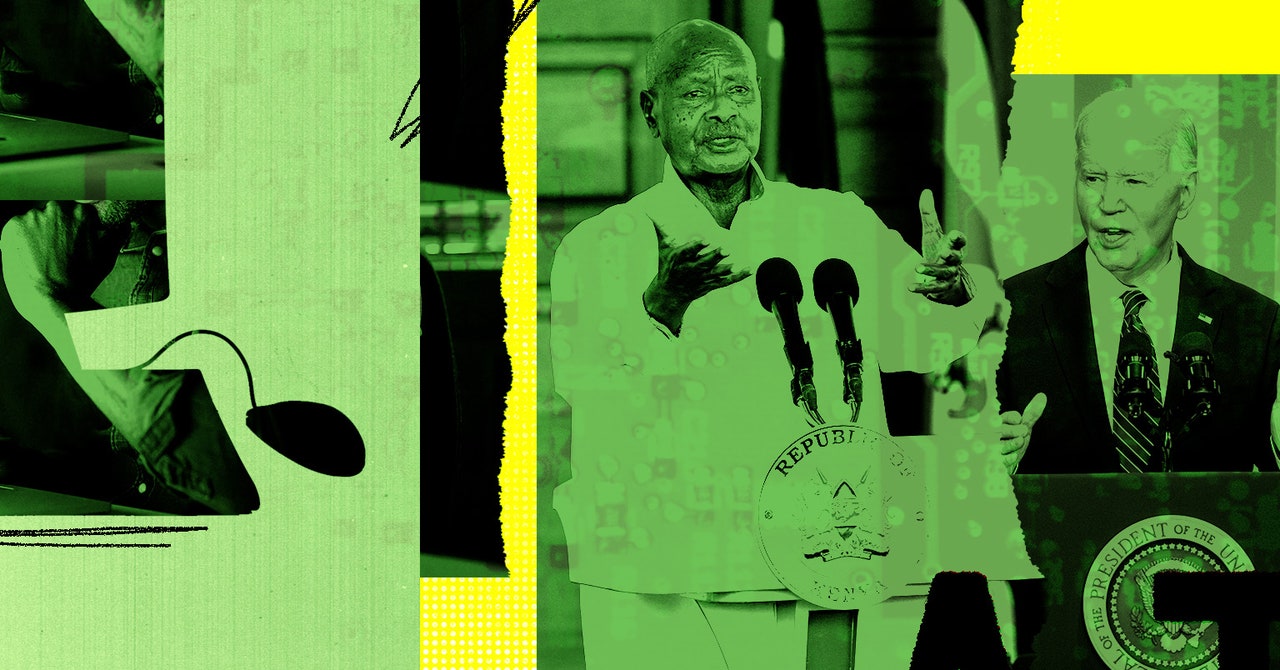

AI projects like OpenAI’s ChatGPT get part of their savvy from some of the lowest-paid workers in the tech industry—contractors often in poor countries paid small sums to correct chatbots and label images. On Wednesday, 97 African workers who do AI training work or online content moderation for companies like Meta and OpenAI published an open letter to President Biden, demanding that US tech companies stop “systemically abusing and exploiting African workers.”

Most of the letter’s signatories are from Kenya, a hub for tech outsourcing, whose president, William Ruto, is visiting the US this week. The workers allege that the practices of companies like Meta, OpenAI, and data provider Scale AI “amount to modern day slavery.” The companies did not immediately respond to a request for comment.

A typical workday for African tech contractors, the letter says, involves “watching murder and beheadings, child abuse and rape, pornography and bestiality, often for more than 8 hours a day.” Pay is often less than $2 per hour, it says, and workers frequently end up with post-traumatic stress disorder, a well-documented issue among content moderators around the world.

The letter’s signatories say their work includes reviewing content on platforms like Facebook, TikTok, and Instagram, as well as labeling images and training chatbot responses for companies like OpenAI that are developing generative-AI technology. The workers are affiliated with the African Content Moderators Union, the first content moderators union on the continent, and a group founded by laid-off workers who previously trained AI technology for companies such as Scale AI, which sells datasets and data-labeling services to clients including OpenAI, Meta, and the US military. The letter was published on the site of the UK-based activist group Foxglove, which promotes tech-worker unions and equitable tech.

In March, the letter and news reports say, Scale AI abruptly banned people based in Kenya, Nigeria, and Pakistan from working on Remotasks, Scale AI’s platform for contract work. The letter says that these workers were cut off without notice and are “owed significant sums of unpaid wages.”

“When Remotasks shut down, it took our livelihoods out of our hands, the food out of our kitchens,” says Joan Kinyua, a member of the group of former Remotasks workers, in a statement to WIRED. “But Scale AI, the big company that ran the platform, gets away with it, because it’s based in San Francisco.”

Though the Biden administration has frequently described its approach to labor policy as “worker-centered,” the African workers’ letter argues that this has not extended to them, saying “we are treated as disposable.”

“You have the power to stop our exploitation by US companies, clean up this work and give us dignity and fair working conditions,” the letter says. “You can make sure there are good jobs for Kenyans too, not just Americans.”

Tech contractors in Kenya have filed lawsuits in recent years alleging that tech-outsourcing companies and their US clients such as Meta have treated workers illegally. Wednesday’s letter demands that Biden make sure that US tech companies engage with overseas tech workers, comply with local laws, and stop union-busting practices. It also suggests that tech companies “be held accountable in the US courts for their unlawful operations abroad, in particular for their human rights and labor violations.”

The letter comes just over a year after 150 workers formed the African Content Moderators Union. Meta promptly laid off all of its nearly 300 Kenya-based content moderators, workers say, effectively busting the fledgling union. The company is currently facing three lawsuits from more than 180 Kenyan workers, demanding more humane working conditions, freedom to organize, and payment of unpaid wages.

“Everyone wants to see more jobs in Kenya,” Kauna Malgwi, a member of the African Content Moderators Union steering committee, says. “But not at any cost. All we are asking for is dignified, fairly paid work that is safe and secure.”