If you’re taking a flight to any country that’s a member of the European Union—and there are 27 of them—then there are some updated carry-on luggage rules you need to make yourself aware of before you turn up at the airport. When you pass through security, agents will ask you to remove liquids and electronics from your carry-on so they can be scanned.

In theory, these rule changes are only temporary: They’re a stopgap solution while we wait for the next generation of security scanners to go fully live. The implementation of these C3 scanners, which can properly analyze liquids and electronics so they don’t have to be taken out of your hand luggage, has been delayed beyond the original June 2024 deadline.

The official implementation date for these new carry-on baggage rules is September 1, 2024, so they are already in effect. There’s no fixed date as to when they will be relaxed, because there are a lot of factors at play. It’s likely the rules will be in place until at least the middle of 2025.

Which Airports Are Affected?

To be clear, these aren’t brand-new rules for your carry-on luggage. What’s happening is that EU airports are reverting to the previous set of rules about what types of things need to be taken out of your carry-on for inspection when you pass through security.

All airports in EU countries are affected, as are some airports in the UK (including Heathrow, Gatwick, Stansted, and Manchester) and airports in Iceland, Switzerland, Liechtenstein, and Norway.

Strictly speaking, only airports that have C3 scanners installed should be rolling back their rules; other airports that never installed the C3 scanners have continued to follow the old procedures. The rollout of the new tech has been costlier and taken longer than expected, and there are still bugs in the system—so the old security rules are once again required.

Officially, it’s a “tech issue” with the new equipment: Although the machines have been installed in a number of airports, it seems their scanning capabilities aren’t quite up to the high level required. While that gets sorted out, the scanners can’t be relied upon to spot dangerous contents in luggage.

Getting items in and out of bags always takes time, and some passengers won’t know exactly what they’re supposed to do, so you might want to leave some extra time in your schedule to allow for queues and delays.

What Are the Rules?

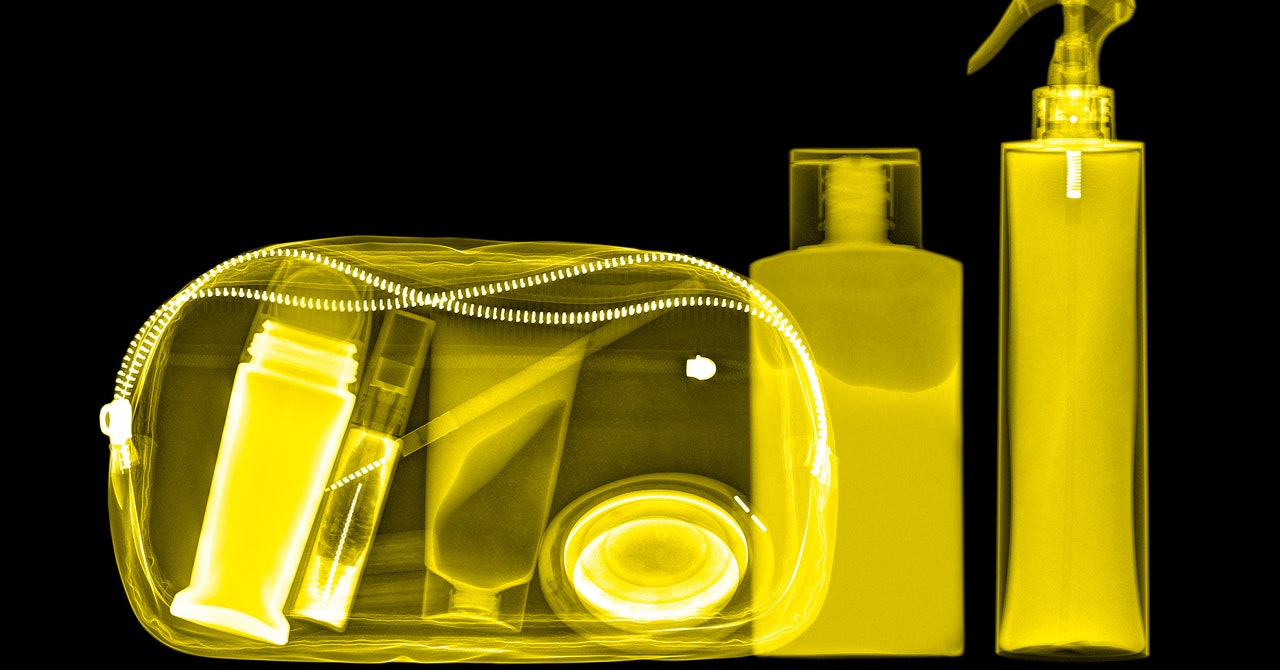

To guard against the threat of explosives, all liquids and electronics will need to be taken out of bags and scanned separately. In addition, liquids should be inside containers no bigger than 100 milliliters (3.4 fluid ounces) and are to be placed in a clear plastic bag of around 20 x 20 cm (7.9 x 7.9 inches).

This “100 ml rule” applies to all liquids, including (but not limited to) drinks, semiliquid foods like soups, cosmetics and toiletries, sprays, toothpaste, shower gel, hair gel, and contact lens solution. As usual, these liquids and typical electronics can be put in your checked luggage with no issue.

Exceptions to the 100-ml rule are sometimes made for those traveling with small babies and for those with special dietary and health requirements (including people who need to carry medication). If you fit into these categories, you must check in advance with the airport, and if you’re taking medication with you then you may need a doctor’s note.

For seasoned travelers, this is all going to be pretty familiar. As the new baggage scanners start to come online, though, the security checkpoint process at airports should become faster and more streamlined overall. If you’re in any doubt about the rules, check with your airline and the airport involved close to the time you’re traveling.

Finally, a note on something that isn’t changing, at least not yet: While there have been rumors that the EU is going to apply rules on standardized case sizes for carry-on luggage, nothing has been decided. The idea has been discussed, but for the time being there’s no single size standard.
