Before Orkut launched in January 2004, Büyükkökten warned the team that the platform he’d built it on could handle only 200,000 users. It wouldn’t be able to scale. “They said, let’s just launch and see what happens,” he explains. The rest is online history. “It grew so fast. Before we knew it, we had millions of users,” he says.

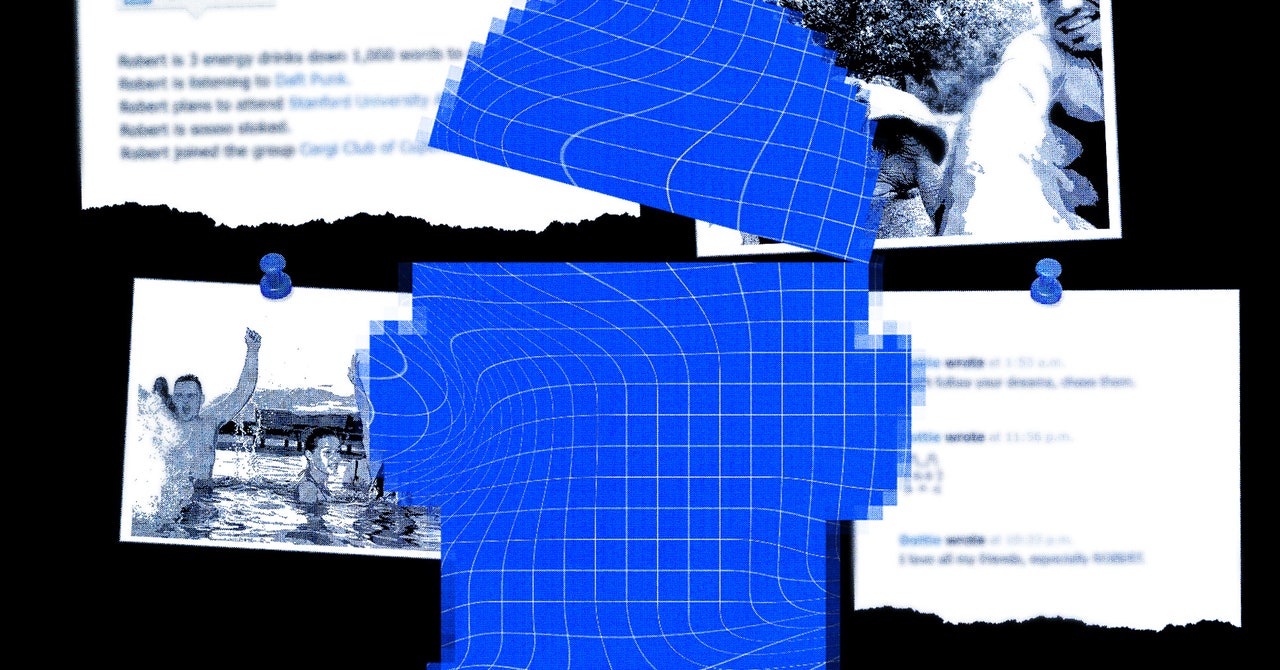

Orkut featured a digital Scrapbook and the ability to give people compliments (ranging from “trustworthy” to “sexy”), create communities, and curate your very own Crush List. “It reflected all of my personality traits. You could flatter people by saying how cool they were, but you could never say something negative about them,” he says.

At first, Orkut was popular in the US and Japan. But, as predicted, server issues severed its connection to its users. “We started having a lot of scalability issues and infrastructure problems,” Büyükkökten says. They were forced to rewrite the entire platform using C++, Java, and Google’s tools. The process took an entire year, and scores of original users dropped off due to sluggish speeds and one too many encounters with Orkut’s now-nostalgic “Bad, bad server, no donut for you” error message.

Around this time, though, the site became incredibly popular in Finland. Büyükkökten was bemused. “I couldn’t figure it out until I spoke to a friend who speaks Finnish. And he said: ‘Do you know what your name means?’ I didn’t. He told me that orkut means multiple orgasms.” Come again? “Yes, so in Finland, everyone thought they were signing up to an adult site. But then they would leave straight after as we couldn’t satisfy them,” he laughs.

Awkward double meanings aside, Orkut continued to spread across the world. In addition to exploding in Estonia, the platform went mega in India. Its true second home, though, was Brazil. “It became a huge success. A lot of people think I’m Brazilian because of this,” Büyükkökten explains. He has a theory about why Brazil went nuts for Orkut. “Brazil’s culture is very welcoming and friendly. It’s all about friendships and they care about connections. They’re also very early adopters of technology,” he says. At its peak, 11 million of Brazil’s 14 million internet users were on Orkut, most logging on through cybercafes. It took Facebook seven years to catch up.

But Orkut wasn’t without its problems (and many fake profiles). The site was banned in Iran and the United Arab Emirates. Government authorities in Brazil and India had concerns about drug-related content and child pornography, something Büyükkökten denies existed on Orkut. Brazilians coined the word orkutização to describe a social media site like Orkut becoming less cool after going mainstream. In 2014, having hemorrhaged users due to slow server speeds, Facebook’s more intuitive interface, and issues surrounding privacy, Orkut went offline. “Vic Gundotra, in charge of Google+, decided against having any competing social products,” Büyükkökten explains.

But Büyükkökten has fond memories. “We had so many stories of people falling in love and moving in together from different parts of the world. I have a friend in Canada who met his wife in Brazil through Orkut, a friend in New York who met his wife in Estonia and now they’re married with two kids,” he says. It also provided a platform for minority communities. “I was talking to a gay journalist from a small town in São Paulo who told me that finding all these LGBTQ people on Orkut transformed his life,” he adds.

Büyükkökten left Google in 2014 and founded a new social network, again featuring a simple five-letter title: Hello. He wanted to focus on positive connection. Hello used “loves” rather than likes. Users could choose from more than 100 personae, ranging from Cricket Fan to Fashion Enthusiast, and were then connected to people with common interests. Soft-launched in Brazil in 2018 with 2 million users, Hello enjoyed “ultra-high engagement” that Büyükkökten claims surpassed the likes of Instagram and Twitter. “One of the things that stood out in our user surveys was that people said when they open Hello, it makes them happy.”

The app was downloaded more than 2 million times—a fraction of the users Orkut enjoyed—but Büyükkökten is proud of it. “It surpassed all our dreams. There were numerous instances where our K-Factor (the number of new people that existing users bring to an app) reached 3, leading us to exponential growth,” he says. But, in 2020, Büyükkökten bid goodbye to Hello.
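To see why a K-factor above 1 compounds so quickly, a rough sketch helps. The article gives only the definition of the metric, so everything else here is an assumption: the function name `project_viral_growth`, the seed of 1,000 users, and the simplification that K stays constant and each new cohort recruits exactly once. It is an illustration of the arithmetic, not Hello’s actual growth data.

```python
def project_viral_growth(seed_users: int, k_factor: float, cycles: int) -> list[float]:
    """Cumulative users after each invitation cycle.

    Simplified, hypothetical model: every member of the newest cohort
    brings in `k_factor` new users, once, and K never changes.
    """
    totals = [float(seed_users)]
    newest_cohort = float(seed_users)
    for _ in range(cycles):
        # Only the most recent arrivals recruit in this cycle.
        newest_cohort *= k_factor
        totals.append(totals[-1] + newest_cohort)
    return totals


if __name__ == "__main__":
    # Compare a fading app (K < 1), a linear one (K = 1), and K = 3.
    for k in (0.5, 1.0, 3.0):
        print(k, [round(t) for t in project_viral_growth(1_000, k, 5)])
```

Under these assumptions, a K-factor below 1 flattens out, a K-factor of exactly 1 adds the same number of users each cycle, and a K-factor of 3 triples each new cohort, which is the exponential curve Büyükkökten describes.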

Now he’s working on a new platform. “It’ll leverage AI and machine learning to optimize for improving happiness, bringing people together, fostering communities, empowering users, and creating a better society,” he says. “Connection will be the cornerstone of design, interaction, product, and experience.” And the name? “If I told you the new brand, you would have an aha moment and everything would be crystal clear,” he says.

Once again, it’s driven by his enduring desire to connect people. “One of the biggest ills of society is the decline in social capital. After smartphones and the pandemic, we have stopped hanging out with our friends and don’t know our neighbors. We have a loneliness epidemic,” he says.

He is fiercely critical of current platforms. “My biggest passion in life is connecting people through technology. But when was the last time you met someone on social media? It’s creating shame, pessimism, division, depression, and anxiety,” he says. For Büyükkökten, optimism is more important than optimization. “These companies have engineered the algorithm for revenue,” he says. “But it’s been awful for mental health. The world is terrifying right now and a lot of that has come through social media. There’s so much hate.”

Instead, he wants social media to be a place of love and a facilitator for meeting new people in person. But why will it work this time around? “That’s a really good question,” he says. “One thing that has been really consistent is that people miss Orkut right now.” It’s true—Brazilian social media has recently been abuzz with memes and memories to celebrate the site’s 20th birthday. “A teenage boy even recently drove 10 hours to meet me at a conference to talk about Orkut. And I was like, how is that even possible?” he laughs. Orkut’s landing page is still live, featuring an open letter calling for a social media utopia.

This, along with our collective desire for a more human social media, is what makes Büyükkökten believe that his next platform is one that will truly stick around. Has he decided on that all-important name? “We haven’t announced it yet. But I’m really excited. I truly care. I want to bring that authenticity and sense of belonging back,” he concludes. Perhaps, as his Finnish fans would joke, it’s time for Orkut’s second coming.

This story first appeared in the July/August 2024 UK edition of WIRED magazine.