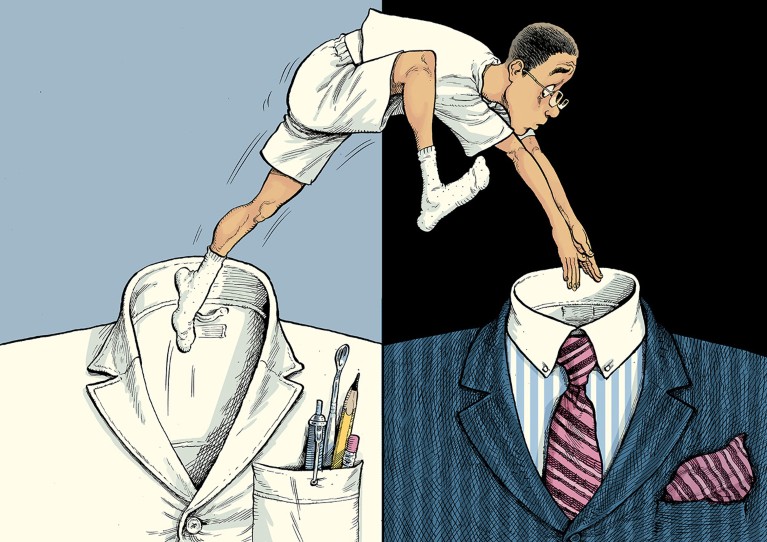

Illustration: David Parkins

The advice

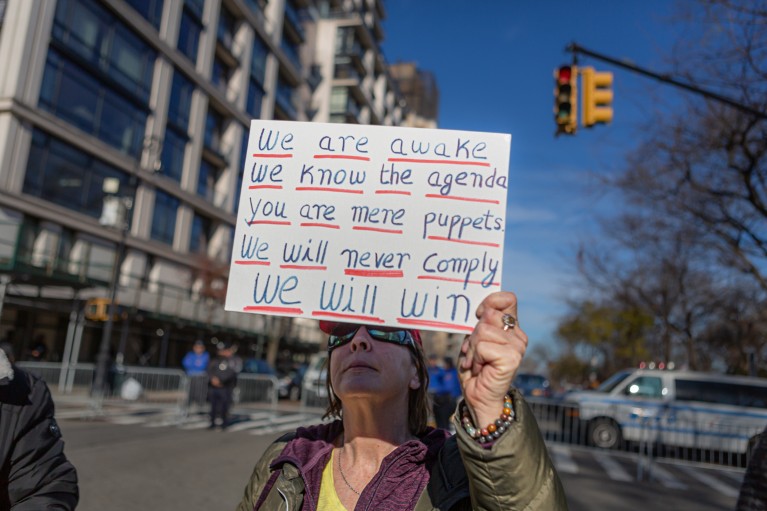

You’re not alone. The transition from technical roles to management is a common theme in the careers of scientists and engineers who work in industry. Deciding whether, when and how to make the move is a serious undertaking.

In industry, an individual contributor is someone ‘doing the work’ of research and development. They answer to a project manager or supervisor, but do not have anyone who answers to them. Although these jobs are what people tend to think of when they envision a scientist’s work in industry, companies often offer limited opportunities for promotion on this path.

Lack of chances for advancement in technical or hands-on roles can lead mid-career engineers and scientists to transition to management, even when they don’t have the skills, working style or inclination to succeed in a leadership role. One 2008 study (ref. 1) found that mid-career engineers who felt ‘derailed’ in their career paths tended to be reluctant, under-prepared managers. They felt passed over for further promotion, took little satisfaction in their work and had a reduced sense of personal effectiveness.

Nature reached out to three scientists for guidance on how to approach this kind of career crossroads.

Know yourself

Roni Wright is a molecular biologist who runs a laboratory group at the International University of Catalonia in Barcelona, Spain. She also runs workshops, courses and one-on-one training in career development for scientists at the Barcelona Biomedical Research Park, which brings together research institutes based in the city. The company you work for might have something similar — large organizations and research centres often offer career-development resources for their employees. Wright suggests that the first step is to carry out a self-assessment, reflecting on your skills, working style and values.

You asked about personality tests. Their scientific validity is hotly debated, but they can be helpful starting points for self-reflection, providing some insight into your behavioural patterns and decision-making style. For that reason, large employers often use them to encourage such thinking. Wright suggests that the Myers–Briggs Type Indicator (MBTI) and DISC assessment are popular places to start. The MBTI was developed by US writers Katharine Cook Briggs and Isabel Briggs Myers in 1944, inspired by the work of Swiss psychologist and psychotherapist Carl Jung. Through a series of about 90 questions, the MBTI evaluates the test-taker’s preferences in four aspects of personality (introversion–extraversion, sensing–intuition, thinking–feeling and judging–perceiving) and sorts them into one of 16 types.

DISC assessments, based on the DISC personality theory developed by US psychologist William Moulton Marston in the 1920s, are specifically geared towards workplace interaction. They categorize the test-taker according to four personality profiles — which Marston called dominance, inducement, submission and compliance — to help them understand their own working style and develop strategies for engaging with others. Since the 1940s, various companies have published assessments based on Marston’s theory, including the publishing company Wiley, with its test Everything DiSC, and Truity Psychometrics in Roseville, California. Most companies update the model and adapt the acronym to their own terminology.

Versions of both these self-assessments are available to take online for free.

Honest conversation

To get a clearer understanding of your own strengths and weaknesses, Nimrod Levin, a vocational psychologist and career-counselling specialist at the University of Lausanne, Switzerland, recommends getting an outside perspective. “Talk to people you trust at all levels of the organization — meaning people that are above you, at the same level and below you — and have an honest conversation about this career move,” suggests Levin. “How do they see it, what do they anticipate being a challenge for you and what do they see that would be an asset for you in one role or the other?” In this “360-degree reflective process”, some recurring themes are likely to reveal themselves.

Your question alludes to another important, and often-underestimated, factor — the people you would be working with. Levin says that, in his experience, “it’s often more the interpersonal environment, than the specific tasks of the job, that determine to what degree the person is happy”. Instead of framing this as choosing between two job titles, you could look at it as a choice between configurations of co-workers: the groups of people you would be working with and how you would relate to them in either role.

Personal situation

Jennifer Hunt offers a personal perspective on career shifts in your field. Hunt is a chemical engineer who worked in research and development for 33 years, first as an individual contributor and then as a project manager for contracts to develop hydrogen fuel cells. When the opportunity arose, she transitioned out of research and into a more people-focused role in applications engineering at Unison Energy. That career move helped Hunt, who is based in California, to find the financial stability she needed at that stage of her life. “I had two small kids. I didn’t have another income coming in from a partner, and I didn’t know if I’d have a job after each contract was up,” she says. “I decided that I needed something else.”

She continues: “Instead of the hamster wheel of always trying to find funding for the next project, I had a steady income. So that is something to ask yourself — how much of the decision is about finances? On the managerial path, you end up making more money.”

But it’s not for everyone. “As a manager, you have responsibility over the livelihoods of the people on your team,” Hunt stresses. “They need you to be their guide. It’s a tricky role.” The best bosses, she says, are the ones who are able to teach without demeaning, learn from the people who work for them and act as mentors to their teams. If you can do that, you might find management very fulfilling.

Hunt doesn’t regret taking the leap, but leaving the lab involved some sacrifice.

“I will say that I loved working in the lab. I missed the high of being a player in the whole movement of knowledge,” says Hunt. “When you leave the bench, you’re still part of that movement, but in a different way. You get a different perspective on the field.”

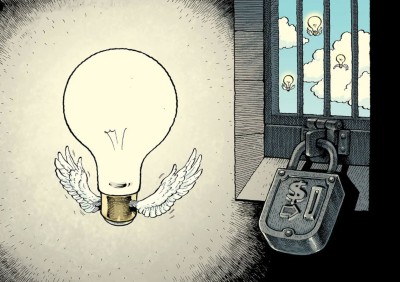

How can I publish open access when I can’t afford the fees?

She has used that perspective to draw connections. The company she now works for is not in the business of research and development, but Hunt is using knowledge and connections from her past work to get the firm involved in research projects, kick-starting collaborations with research groups and introducing the company to funding opportunities with the US Department of Energy. These kinds of project have helped her to recover some of the thrill, and the feeling of making a contribution, that she loved about lab work. “It’s exciting to help bridge the gap between the technology of the future and the actual industry of today,” she says.

All three advice-givers agree that there is no shame in pausing to recalibrate or change direction. “Careers are rarely linear,” says Wright. “Lives change, circumstances change, we change and, if we want to be both successful and happy, our careers change with us.”

In Wright’s years of running career-development workshops, the panellists she has hosted have come from a wide array of scientific backgrounds and diverse career paths. But they tend to offer a certain piece of advice in common, she says. “It always strikes me how the main piece of advice is to follow what makes you happy, what you love doing. As scientists, we all have that passion. Make that first move, try something new, follow your passion and you will land on your feet.”
