Hello Nature readers, would you like to get this Briefing in your inbox free every week? Sign up here.

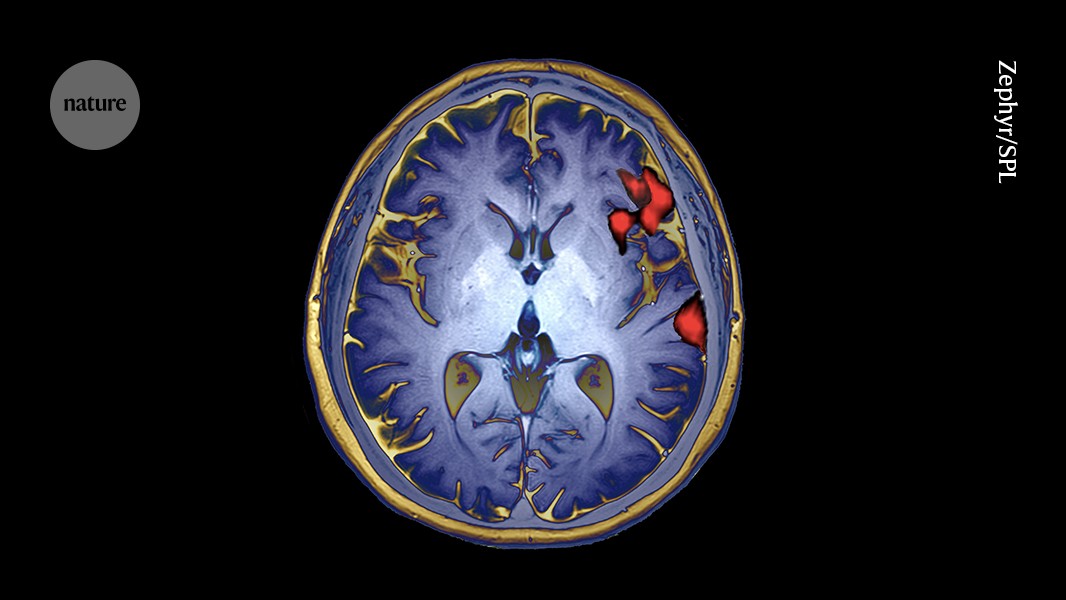

Medical imaging shows brain activity during speech production (artificially coloured). Credit: Zephyr/SPL

An AI-powered brain implant has helped, for the first time, a paralysed bilingual person speak in both their languages. The AI system decodes the man’s neural patterns and interprets the language he’s using — English or his native Spanish — based partly on which combination of words makes the most sense. “What languages someone speaks are actually very linked to their identity,” says neurosurgeon and study co-author Edward Chang. “Our long-term goal has never been just about replacing words, but about restoring connection for people.”

Nature | 5 min read

Reference: Nature Biomedical Engineering paper

Researchers are racing to create their own versions of DeepMind’s revolutionary protein-structure-prediction AI AlphaFold3, which was released without its code, to the frustration of many scientists. “It would be bad if capabilities that are just so fundamental to our ability to do drug discovery… end up getting locked up,” says computational biologist Mohammed AlQuraishi, whose team hopes to complete an ‘OpenFold3’ this year. Computational biophysicist David Baker’s team is adapting a version of their open-fold algorithm RoseTTAFold, and independent software engineer Phil Wang has begun a crowdsourced replica of AlphaFold3. Responding to the backlash, DeepMind has promised to release the code by year’s end.

Nature | 6 min read

Reference: Nature Methods paper

The Chinese-made chatbot ChatGLM performs as well as ChatGPT on many measures and even outperforms it in Chinese, say its creators. Models that are tailored to different languages avoid “oversimplifying or neglecting the specific characteristics of certain languages and cultures”, says machine-learning specialist Adina Yakefu. Although ChatGPT and many of its rivals can respond in a variety of languages, most of them are built by US companies and mainly use English. By contrast, ChatGLM is designed to work in both Chinese and English.

Nature | 7 min read

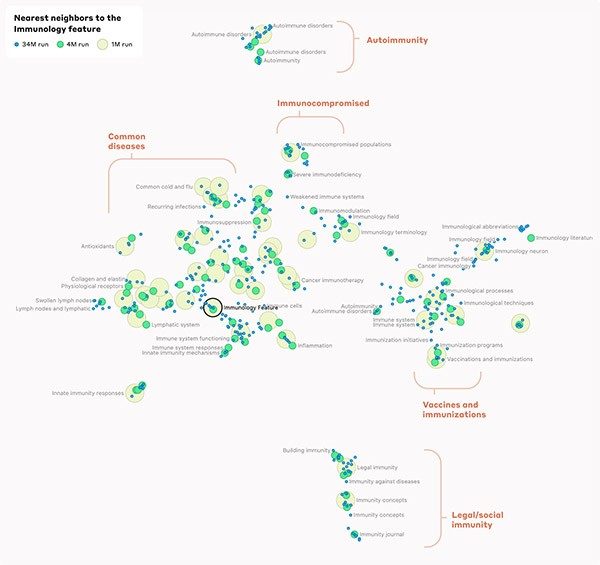

Infographic of the week

Templeton et al, Anthropic PBC

Researchers from AI company Anthropic have uncovered how more than 10 million concepts are represented as ‘neuron’ activation patterns inside the large language model Claude Sonnet. This map (see higher-resolution version) is one example. It shows how the chatbot correlates immunology with immunosuppression, vaccines, specific diseases and even concepts such as legal immunity. This suggests that the AI model’s internal organization somewhat corresponds to our human notion of similarity. (The New York Times | 5 min read)

Reference: Anthropic preprint (not peer reviewed) & accompanying analysis

Features & opinion

Neuroscientists Mackenzie and Alexander Mathis met as graduate students, got married and, together with colleagues, created the blockbuster motion-tracking tool DeepLabCut. The tool uses a machine-learning algorithm to track a lab animal’s body parts in videos, without the use of intrusive markers. It’s been used to study behaviour in everything from fruit flies to horses — but animals that camouflage themselves create unique challenges. “There was a student who had tweeted some videos of octopus in the Red Sea — as a human, you couldn’t even see the octopus until it moved,” Mackenzie Mathis says. “That was quite amazing to see.”

Nature | 8 min read

The chimera of empathy in AI systems is like an optical illusion: it persists even when we rationally know better, says a group of psychologists, philosophers and computer scientists. Repeated interactions with seemingly empathic AI models could threaten one of our core values of being in touch with reality, the researchers write. “It is worth remembering that beings that have the capacity to care should also have the capacity to withdraw their care.”

Nature Machine Intelligence | 6 min read

“Our working conditions amount to modern-day slavery,” write almost 100 content moderators and data labellers in Kenya who are contracted by US tech giants such as Meta and OpenAI. Their work often involves screening hours of harmful content — usually for less than US$2 per hour. There is insufficient mental-health support and companies engage in union-busting practices, the workers say. They are asking US President Joe Biden to ensure US companies can be held accountable for their unlawful operations abroad, in particular for their human rights and labour violations.

Today, I’m getting cute aggression watching a fluffy version of Boston Dynamics’ robot dog Spot do an adorable little dance.

Your feedback (written, or in the form of interpretive dance) is always welcome at [email protected].

Thanks for reading,

Katrina Krämer, associate editor, Nature Briefing

With contributions by Sarah Tomlin

Want more? Sign up to our other free Nature Briefing newsletters:

• Nature Briefing — our flagship daily e-mail: the wider world of science, in the time it takes to drink a cup of coffee

• Nature Briefing: Microbiology — the most abundant living entities on our planet, microorganisms, and the role they play in health, the environment and food systems

• Nature Briefing: Anthropocene — climate change, biodiversity, sustainability and geoengineering

• Nature Briefing: Cancer — a weekly newsletter written with cancer researchers in mind

• Nature Briefing: Translational Research covers biotechnology, drug discovery and pharma
